From Riemann to Einstein to Transformers

A Deep Research Report on Manifolds, General Relativity, and Machine Learning

Part I: Riemann, Einstein, and the Geometry of the Universe

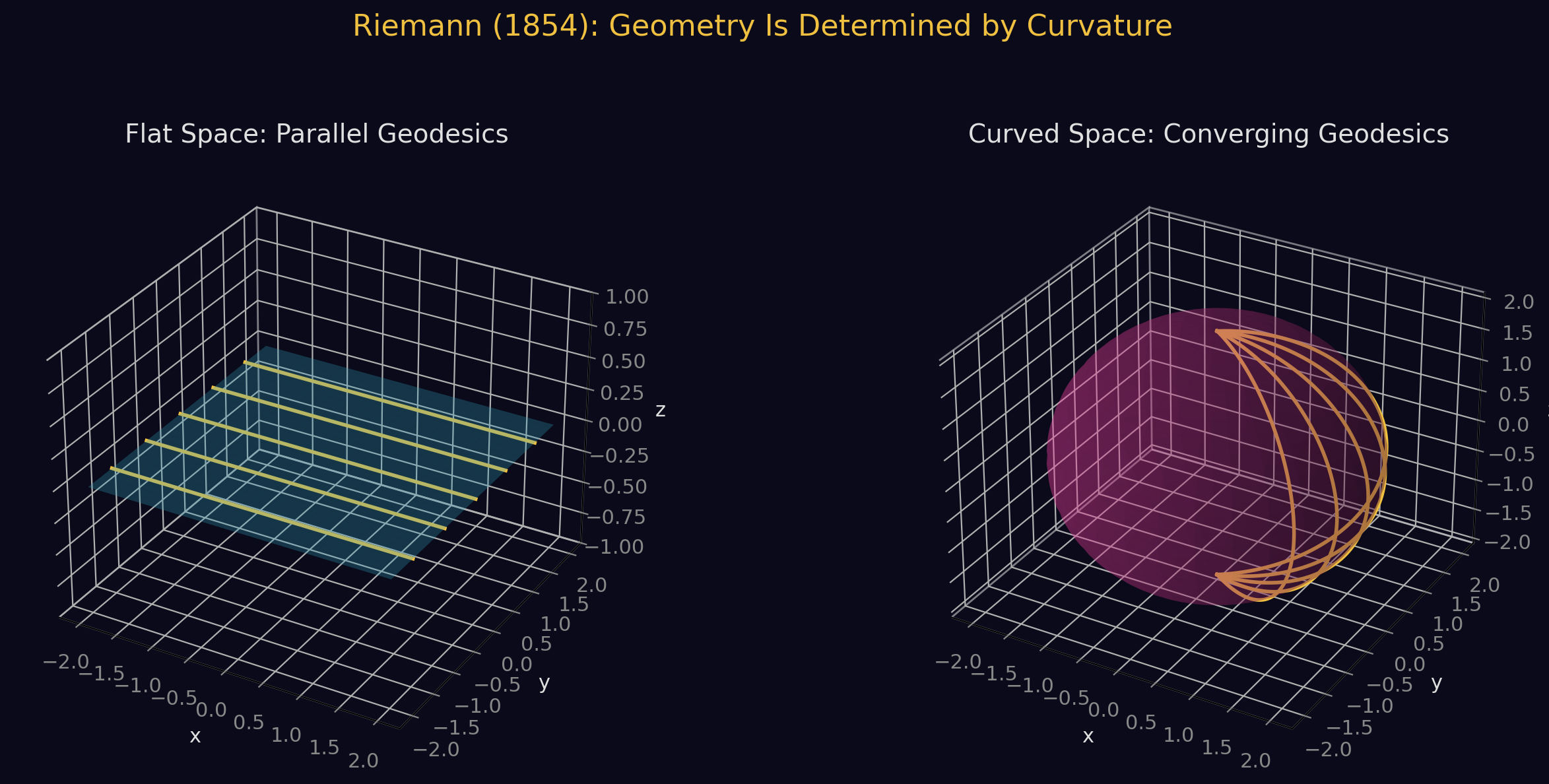

1. Riemann's 1854 Revolution

On June 10, 1854, Bernhard Riemann delivered his habilitation lecture "Uber die Hypothesen, welche der Geometrie zu Grunde liegen" ("On the Hypotheses Which Lie at the Foundations of Geometry") before the Faculty of Arts at the University of Gottingen. Carl Friedrich Gauss, his examiner, had chosen this topic from among three proposals -- sensing that Riemann had something profoundly original to say.

The Three Parts of the Lecture

Part I -- The Concept of an n-Dimensional Manifold. Riemann questioned the very foundations of geometry, arguing that Euclidean geometry's axioms are not self-evident truths but rather hypotheses requiring empirical verification. He introduced the concept of an n-dimensionally extended manifold -- a space whose points are labeled by n independent coordinates. This was the first general, abstract definition of a higher-dimensional space in mathematics.

Part II -- Metric Relations. Riemann asked: how do we measure distances in such a space? He introduced a positive definite quadratic form -- the Riemannian metric:

where the are functions of position encoding how distances are measured at each point. This is a far-reaching generalization of the Pythagorean theorem. He then developed the concept of curvature for these general spaces, introducing what is now called the Riemann curvature tensor. He showed that in n dimensions, you need independent numbers at each point to fully characterize the curvature.

Part III -- Application to Physical Space. Riemann argued that the geometry of physical space is not determined a priori by mathematical axioms but is an empirical question. He raised the possibility that space might be unbounded yet finite (like the surface of a sphere), and he made his most prescient claim:

"Either therefore the reality which underlies space must form a discrete manifoldness, or we must seek the ground of its metric relations outside it, in binding forces which act upon it."

The metric of space is not fixed but is dynamically determined by physical forces.

Generalizing Gauss

Riemann's genius was recognizing that Gauss's intrinsic geometry of curved surfaces (and the Theorema Egregium) could be extended to spaces of any number of dimensions, without requiring embedding in any ambient space. Where Gauss had three metric coefficients (E, F, G) for surfaces, Riemann introduced independent functions . Where Gauss had one curvature number (Gaussian curvature K), Riemann introduced a full tensor with components.

2. Einstein's General Relativity (1915)

The Journey from Special to General Relativity

- 1905: Einstein publishes special relativity, unifying space and time. But the theory only applies to non-accelerating frames and says nothing about gravity.

- 1907: Einstein has the "happiest thought of my life" -- if a person falls freely, they don't feel their own weight. This equivalence principle (gravity and acceleration are locally indistinguishable) implies gravity might be a property of spacetime itself.

- 1912: Einstein returns to ETH Zurich and turns to his friend Marcel Grossmann: "You've got to help me, or I'll go crazy." Grossmann introduces Einstein to Riemann's differential geometry, the tensor calculus of Ricci and Levi-Civita, and the Riemann curvature tensor.

- 1913: Einstein and Grossmann publish the Entwurf paper -- nearly the full theory, but with incorrect field equations. Einstein's Zurich Notebook shows he actually wrote down the correct (Ricci tensor-based) equations but temporarily rejected them.

- November 1915: In four weekly papers to the Prussian Academy, Einstein presents the final theory. On November 18, he calculates Mercury's perihelion precession (43 arcseconds/century) and his "heart palpitates." On November 25, the complete field equations are published.

The Einstein Field Equations

Every term on the left is built from Riemann's geometry:

- -- the metric tensor (Riemann's fundamental object), encoding spacetime geometry. 10 independent components in 4D.

- Christoffel symbols Gamma -- 40 functions computed from and its first derivatives, encoding parallel transport and geodesics.

- Riemann curvature tensor -- 20 independent components, completely characterizing intrinsic curvature. Built from Christoffel symbols and their derivatives.

- Ricci tensor -- contraction of the Riemann tensor (10 components). Measures how volumes are distorted.

- Scalar curvature R -- trace of Ricci tensor. Single number encoding average curvature.

- Einstein tensor -- the unique divergence-free rank-2 tensor built from curvature. Its vanishing divergence (from the Bianchi identity) guarantees energy-momentum conservation.

- Lambda -- cosmological constant. Added by Einstein in 1917 for a static universe; now understood as dark energy.

The right side:

- -- stress-energy tensor describing matter, energy, pressure, and stress.

- -- coupling constant, fixed by recovering Newtonian gravity in the weak-field limit.

Wheeler's summary: "Spacetime tells matter how to move; matter tells spacetime how to curve."

The Intellectual Hierarchy

- Metric tensor (10 functions) -- defines geometry

- Christoffel symbols Gamma (40 functions) -- from and first derivatives

- Riemann tensor (20 components) -- from Gamma and its derivatives

- Ricci tensor (10 components) -- contraction of Riemann

- Scalar curvature R (1 number) -- contraction of Ricci

- Einstein tensor (10 components) -- divergence-free combination

- Field equations: + Lambda = kappa

3. The Universe as a Manifold

Spacetime Structure

Our universe is modeled as a 4-dimensional Lorentzian (pseudo-Riemannian) manifold with metric signature (-,+,+,+). This is not a strictly Riemannian manifold (which has positive-definite metric) -- the indefinite signature creates the causal structure of spacetime: timelike, spacelike, and null (lightlike) separations, light cones, and the arrow of causality.

At each moment of cosmic time, the spatial slices ARE genuine Riemannian manifolds (positive-definite metric), and the question "what is the shape of the universe?" refers to these 3-dimensional spatial slices.

The FLRW Metric

The Friedmann-Lemaitre-Robertson-Walker metric is the unique metric for a spatially homogeneous and isotropic universe:

where:

- a(t) is the scale factor (how distances expand over time)

- k = {-1, 0, +1} determines spatial curvature: hyperbolic, flat, or spherical

Observational Results

The Planck satellite (2018) measured the curvature parameter:

This is consistent with perfect spatial flatness. Combined with baryon acoustic oscillation data, the universe is flat to extraordinary precision.

However, flatness constrains only local geometry, not global topology. A flat universe could be R^3 (infinite) or a 3-torus T^3 (finite). The topology remains unknown.

Thurston's Eight Geometries

William Thurston's Geometrization Conjecture (proven by Perelman in 2003) states that every closed 3-manifold decomposes into pieces carrying one of exactly eight geometric structures:

- S^3 (spherical) -- constant positive curvature

- E^3 (Euclidean) -- flat

- H^3 (hyperbolic) -- constant negative curvature

- S^2 x R -- product of sphere with line

- H^2 x R -- product of hyperbolic plane with line

- Nil -- nilgeometry (Heisenberg group)

- SL(2,R)~ -- universal cover of SL(2,R)

- Sol -- solvable geometry

Only the first three are isotropic (look the same in every direction), consistent with the cosmological principle.

Gravitational Waves: Spacetime IS Dynamic

On September 14, 2015, LIGO detected gravitational waves from merging black holes 1.3 billion light-years away. The strain meant LIGO's 4 km arms changed by ~1/1000th of a proton diameter. This confirmed:

- The spacetime metric is dynamic -- it evolves, ripples, and carries energy

- The manifold is smooth (classically) -- waveforms matched numerical relativity simulations perfectly

- Curvature is physical -- the Riemann tensor oscillates and carries energy

Singularities and Geodesic Incompleteness

The Penrose-Hawking singularity theorems (1965-1970) used pure differential geometry to prove that:

- If a trapped surface forms (both ingoing and outgoing light converge), spacetime must be geodesically incomplete

- If the universe is expanding and energy conditions hold, a past singularity (Big Bang) is inevitable

These theorems prove the manifold structure itself breaks down at singularities -- general relativity predicts its own failure, pointing toward quantum gravity.

The Cosmological Constant and Dark Energy

Since 1998, observations show the expansion is accelerating, requiring Lambda > 0. The universe is asymptotically approaching de Sitter space -- a maximally symmetric manifold of constant positive curvature with exponential expansion.

The DESI experiment (2024-2025) found intriguing hints that dark energy may be evolving (weakening over time), which would mean the universe is NOT approaching pure de Sitter geometry but something more complex.

Open Questions

- Is the universe spatially finite or infinite?

- What is the global topology?

- Does the smooth manifold picture survive at the Planck scale?

- What replaces the manifold at singularities?

- Is the cosmological constant truly constant?

Part II: The Connection to Machine Learning

4. The Manifold Hypothesis -- Riemann's Idea Reborn

The manifold hypothesis is one of the most important assumptions in deep learning: real-world high-dimensional data (images, text, audio) concentrates near low-dimensional manifolds embedded in the ambient space.

A 256x256 RGB image lives nominally in R^{196,608}, but natural images occupy a thin manifold of perhaps a few hundred intrinsic dimensions. A random point in R^{196,608} looks like noise.

This is Riemann's framework directly: the data manifold is locally Euclidean (small perturbations give valid data) but globally curved with complex topology. Deep generative models (VAEs, diffusion models) learn coordinates on this manifold.

Evidence

- Shao et al. (CVPR 2018) showed that deep generative model manifolds have "surprisingly little curvature"

- Chadebec et al. (NeurIPS 2022) showed that computing geodesics instead of straight lines in latent space produces dramatically better interpolations

- Mixed-curvature VAEs use products of Riemannian manifolds with different constant curvatures

5. Information Geometry -- Fisher Metric as Riemannian Structure

The space of probability distributions {p(x|theta)} forms a statistical manifold with the Fisher information matrix as its Riemannian metric:

By Chentsov's theorem, this is the unique (up to scaling) Riemannian metric invariant under sufficient statistics. It measures how distinguishable nearby distributions are.

Natural gradient descent (Amari, 1998) uses this geometry:

This is the steepest descent direction on the Riemannian manifold of distributions. FAdam (2024) showed that the Adam optimizer is implicitly a natural gradient method using diagonal empirical Fisher information.

K-FAC (Kronecker-Factored Approximate Curvature) makes this practical by approximating the Fisher matrix via Kronecker products.

6. Hyperbolic Neural Networks

Hyperbolic space (constant negative curvature Riemannian manifold) has exponential volume growth, making it a continuous analogue of trees. This is ideal for hierarchical data.

Poincare Embeddings (Nickel & Kiela, NeurIPS 2017): 5-dimensional hyperbolic embeddings outperform 200-dimensional Euclidean embeddings on WordNet hierarchies.

Hypformer (2024): First full transformer in hyperbolic space using the Lorentz model, achieving O(n) complexity with hyperbolic linear attention.

CAT: Curvature-Adaptive Transformers (2025): Routes each token to Euclidean, hyperbolic, or spherical attention branches.

7. Geometric Deep Learning

Bronstein et al. (2021) systematized Geometric Deep Learning around the "5G" framework:

- Grids -- CNNs (translation equivariance)

- Groups -- Group-equivariant CNNs (rotation/reflection equivariance)

- Graphs -- GNNs and Transformers (permutation equivariance)

- Geodesics -- Intrinsic mesh CNNs (isometry invariance)

- Gauges -- Gauge-equivariant CNNs (local gauge symmetry)

The deepest connection is in gauge-equivariant CNNs, which use the same mathematics as general relativity -- fiber bundles, connections, parallel transport -- to build coordinate-independent networks on manifolds.

8. Attention as Riemannian Geometry

The Core Analogy

| General Relativity | Transformer |

|---|---|

| Metric tensor g_uv | Query-key products Q^T K |

| Parallel transport (connection) | Attention weights * W_V |

| Geodesics | Token trajectories across layers |

| Spacetime curvature | Embedding space geometry |

| Mass-energy distribution | Loss gradient |

| Einstein field equations | Parameter updates (backprop) |

| Least-action principle | Backpropagation |

RiemannFormer (2025)

The problem: tokens at different positions have query/key vectors in different tangent spaces. Standard dot-product attention is geometrically invalid on curved spaces.

The solution: parallel transport vectors into a common tangent space:

The parallel transport decomposes as:

with T_i = s^{-i/2} exp(iX), where s governs radial scaling and rotation matrices encode angular relationships.

Key insight: When s = 1 (flat manifold), this reduces exactly to RoPE (Rotary Position Embeddings). RoPE is a special case of Riemannian attention on flat space.

The Curved Spacetime of Transformer Architectures (Di Sipio, 2025)

Defines an effective metric from query-key interactions:

And formulates a "semantic least action" principle:

S_LM = sum_l [^(l) x_dot_i^(l) x_dot_j^(l) - L_train(x^(l); theta)]

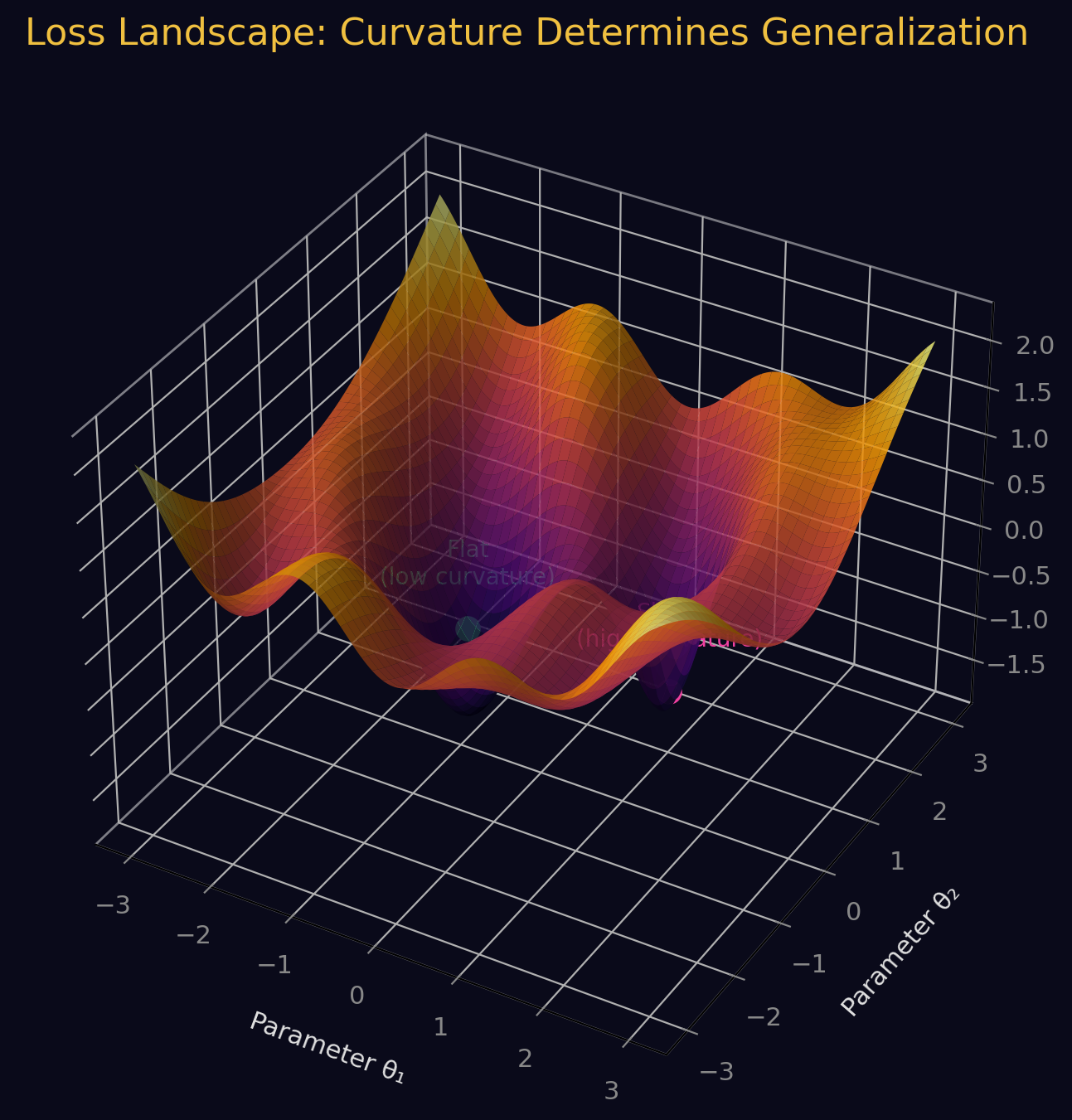

Loss Landscape Curvature

The loss landscape is a Riemannian manifold. At a critical point, its scalar curvature (the same quantity from Einstein's equations) is:

where H is the Hessian. Flatter minima (lower curvature) correlate with better generalization.

9. General Covariance in Neural Networks

Einstein's principle of general covariance (physics is independent of coordinate choice) maps directly to ML:

Gauge-equivariant neural networks (Weiler, 2024) demand that network outputs are independent of arbitrary coordinate choices on the data manifold. The mathematical framework uses:

- Principal fiber bundles over the data manifold

- Associated vector bundles for feature maps

- Parallel transport via connections

- Gauge-equivariant convolution kernels

SE(3)-Transformers achieve provable equivariance under rotations and translations for molecular and 3D data.

10. Neural Networks Solving Einstein's Equations

Einstein Fields (2025) uses neural networks to represent the metric tensor:

Neural network: (t, x) -> g_{alpha beta}(t, x)

Achieving:

- relative precision on metric components

- 4,000x memory compression over discrete representations

- Automatic differentiation for Christoffel symbols, Riemann tensor, Ricci tensor, geodesics

- Validated on Schwarzschild, Kerr, and gravitational wave spacetimes

Physics-informed neural networks also solve black hole perturbation equations and extract quasinormal mode frequencies.

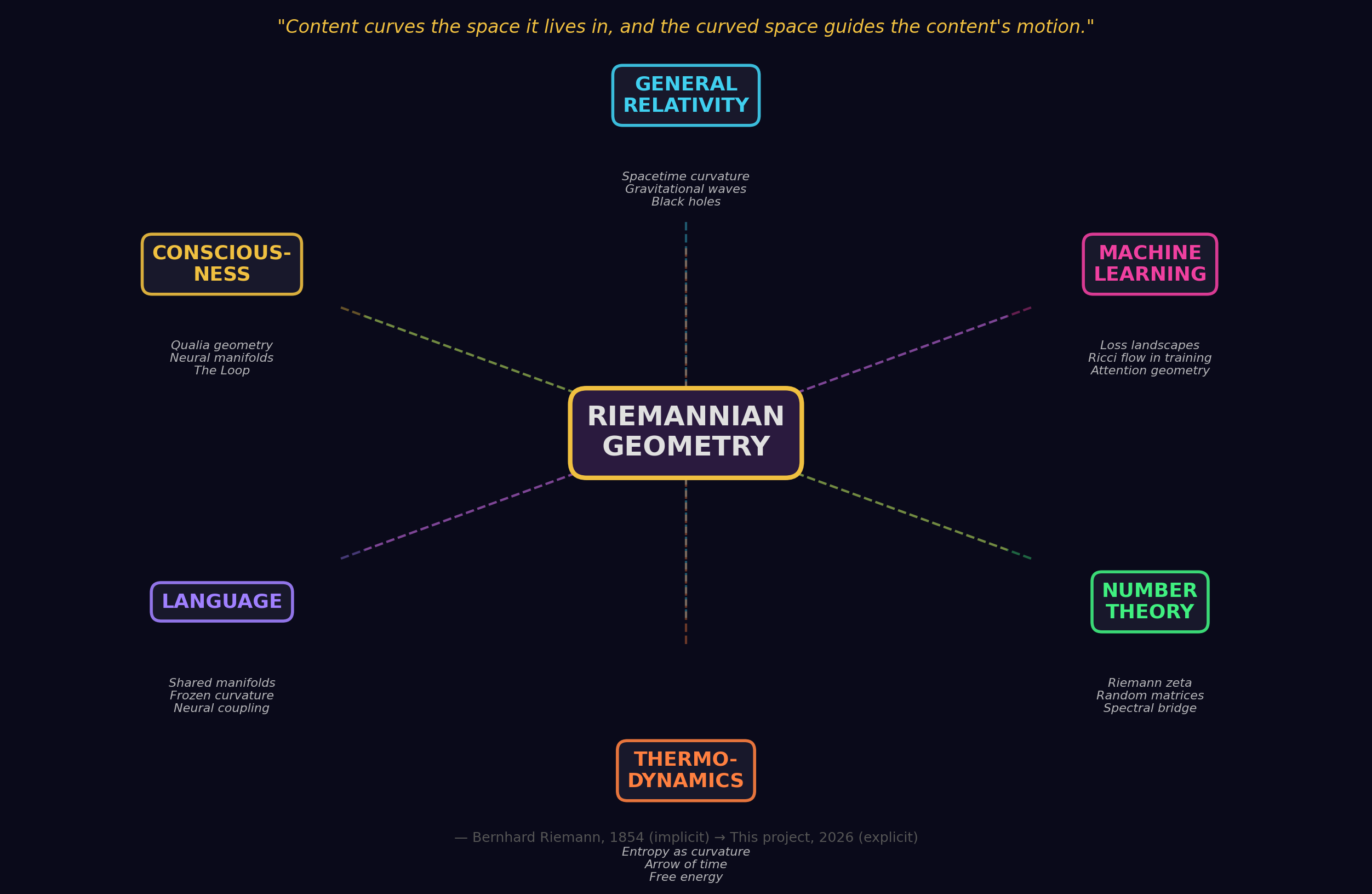

Part III: The Deep Unity

The Same Mathematics, Three Incarnations

Riemann (1854) Einstein (1915) Machine Learning (2017-2025)

-------------- --------------- ----------------------------

Manifold -> Spacetime -> Data manifold / Latent space

Metric tensor -> g_uv (gravitational -> Fisher information / Attention

potential) kernel / Pullback metric

Curvature -> Gravity -> Loss landscape curvature

Geodesics -> Free-fall paths -> Natural gradient / Optimal

interpolation paths

Parallel transport -> Connection Gamma -> Feature transport / Attention

value aggregation

Gauge invariance -> General covariance -> Equivariant neural networks

Ricci flow -> RG flow in QFT -> Geometric optimization

Fiber bundles -> Gauge field theory -> Geometric deep learning

The Philosophical Core

Riemann's 1854 insight -- that geometry is not fixed but determined by content -- describes both:

- How mass curves spacetime (Einstein's general relativity)

- How tokens shape attention landscapes (transformer architectures)

In both cases, the structure of space itself becomes dynamic, responsive, and content-dependent. This is not analogy. It is mathematical inheritance.

The universe is a 4D Lorentzian manifold whose curvature is shaped by mass-energy.

A transformer is a learned manifold whose geometry is shaped by token content.

The mathematics connecting them flows directly from Riemann's original vision.

References

Riemann and Differential Geometry

- Riemann, B. (1854/1868). "On the Hypotheses Which Lie at the Foundations of Geometry." Abhandlungen der Koniglichen Gesellschaft der Wissenschaften zu Gottingen.

- Gauss, C.F. (1827). Disquisitiones generales circa superficies curvas.

- Christoffel, E.B. (1869). "Uber die Transformation der homogenen Differentialausdrucke zweiten Grades."

- Ricci-Curbastro, G. & Levi-Civita, T. (1900). "Methodes de calcul differentiel absolu et leurs applications."

General Relativity

- Einstein, A. (1915). "Die Feldgleichungen der Gravitation." Sitzungsberichte der Preussischen Akademie der Wissenschaften.

- Einstein, A. & Grossmann, M. (1913). "Entwurf einer verallgemeinerten Relativitatstheorie und einer Theorie der Gravitation."

- Misner, C.W., Thorne, K.S., & Wheeler, J.A. (1973). Gravitation. W.H. Freeman.

- Penrose, R. (1965). "Gravitational Collapse and Space-Time Singularities." Physical Review Letters, 14(3), 57-59.

- Hawking, S.W. & Penrose, R. (1970). "The Singularities of Gravitational Collapse and Cosmology." Proc. R. Soc. Lond. A, 314, 529-548.

Cosmology

- Planck Collaboration (2020). "Planck 2018 results. VI. Cosmological parameters." A&A, 641, A6.

- Luminet, J.-P. et al. (2003). "Dodecahedral space topology as an explanation for weak wide-angle temperature correlations in the cosmic microwave background." Nature, 425, 593-595.

- DESI Collaboration (2024-2025). Data Release 1 and 2 results on baryon acoustic oscillations.

Machine Learning and Riemannian Geometry

- Nickel, M. & Kiela, D. (2017). "Poincare Embeddings for Learning Hierarchical Representations." NeurIPS.

- Bronstein, M.M. et al. (2021). "Geometric Deep Learning: Grids, Groups, Graphs, Geodesics, and Gauges." arXiv:2104.13478.

- Amari, S.-i. (1998). "Natural Gradient Works Efficiently in Learning." Neural Computation, 10(2), 251-276.

- Amari, S.-i. & Nagaoka, H. (2000). Methods of Information Geometry. AMS/Oxford.

- Ganea, O. et al. (2018). "Hyperbolic Neural Networks." NeurIPS.

- Weiler, M. et al. (2024). "Equivariant and Coordinate Independent Convolutional Networks." ICML.

- Martens, J. & Grosse, R. (2015). "Optimizing Neural Networks with Kronecker-factored Approximate Curvature." ICML.

Transformers and Geometry

- Di Sipio, R. (2025). "The Curved Spacetime of Transformer Architectures." arXiv:2511.03060.

- RiemannFormer (2025). "A Framework for Attention in Curved Spaces." arXiv:2506.07405.

- Hypformer (2024). "Exploring Efficient Transformer Fully in Hyperbolic Space." arXiv:2407.01290.

- CAT (2025). "Curvature-Adaptive Transformers for Geometry-Aware Learning." arXiv:2510.01634.

- Einstein Fields (2025). "A Neural Perspective to Computational General Relativity." arXiv:2507.11589.

- FAdam (2024). "Adam is a Natural Gradient Optimizer Using Diagonal Empirical Fisher Information." arXiv:2405.12807.

- Balseiro, D. et al. (2023). "On the Curvature of the Loss Landscape." arXiv:2307.04719.

String Theory and Advanced Topics

- Calabi, E. (1954/1957). Conjectures on Kahler manifolds.

- Yau, S.-T. (1978). "On the Ricci curvature of a compact Kahler manifold." Comm. Pure Appl. Math., 31, 339-411.

- Hamilton, R. (1982). "Three-manifolds with positive Ricci curvature." J. Differential Geometry, 17(2), 255-306.

- Perelman, G. (2002-2003). "The entropy formula for the Ricci flow and its geometric applications." arXiv:math/0211159.

- Thurston, W. (1982). "Three-dimensional manifolds, Kleinian groups and hyperbolic geometry." Bull. AMS, 6(3), 357-381.

Deep Research Report -- February 2025

Compiled using multi-agent research across differential geometry, general relativity, cosmology, and machine learning.

Further Discoveries: The Frontier (2024-2026)

Extending "From Riemann to Einstein to Transformers"

Part V: The Geometry of Intelligence

19. The Geometry of Reasoning in LLMs

Reasoning Has Manifold Structure

REMA (Sept 2025) defines the Reasoning Manifold -- a latent low-dimensional geometric structure formed by internal representations of correct reasoning. Erroneous reasoning shows measurable geodesic deviation from this manifold, detectable across layers.

"Curved Inference" (July 2025) treats LLM reasoning as a geometric trajectory in the residual stream:

- Curvature: How sharply the model reorients its internal state

- Salience: How quickly meaning is changing

- Key finding: Even neutral prompts yield structured curvature. The residual stream bends meaningfully in response to semantic features.

"The Shape of Reasoning" (Oct 2025) applies persistent homology to reasoning traces:

- Constructs Vietoris-Rips complexes over step embeddings

- Betti spread and persistence width correlate with expert solution alignment

- Topology predicts reasoning quality better than graph-theoretic metrics

Cross-Entropy Training Sculpts Bayesian Manifolds (Dec 2025)

The most complete geometric account of in-context learning: gradient descent on cross-entropy loss literally creates Bayesian manifolds inside transformers.

- Value vectors organize along 1-dimensional manifolds parameterized by posterior entropy

- Keys form orthogonal hypothesis axes

- Queries implement progressive belief updates

- Confirmed across Pythia, Phi-2, Llama-3, and Mistral families

20. Grokking as a Phase Transition

First-Order Phase Transition (ICLR 2024)

Grokking has exact analytic expressions for critical exponents, grokking probability, and grokking time distribution. A symmetry-breaking transition occurs at a critical value.

Entanglement Transition (March 2025)

In tensor network (MPS) classifiers, grokking manifests as an entanglement transition: entropy switches from volume law (memorization) to sub-volume law (generalization). Sharp entropy drops coincide with collective transitions -- directly analogous to quantum phase transitions.

Complexity Dynamics (2025)

Internal feature rank drops serve as sensitive indicators of phase transitions. Information-theoretic synergy among neurons emerges as a rigorous order parameter. Long overfitting plateaus = critical slowing down.

21. Scaling Laws ARE Renormalization Group Flows

RG Framework for Neural Networks (Oct 2025)

Classifies perturbations as relevant or irrelevant (exactly as in statistical field theory). Reveals universality at large data limits, governed by a Gaussian Process-like UV fixed point.

Scaling Laws Are Redundancy Laws (Sept 2025)

A polynomial tail in data covariance spectrum yields an excess risk power law with exponent proportional to 1/beta (redundancy). Universality established across architectures.

Effective Frontiers (Feb 2026)

Unifies Kaplan and Chinchilla scaling laws as equilibrium solutions to the same constrained optimization under different active bottlenecks via a Max-Bottleneck principle.

22. Causal Attention Masks as Light Cones

The causal attention mask creates genuine spacetime-like causal structure:

- At layer d, each token sees 2^d tokens (exponential receptive field growth)

- This creates a well-defined light cone with "speed of information" = 2x per layer

- Transformers trained autoregressively naturally encode time-delayed causal structures (Jan 2026)

- Tokens cluster in causal attention like matter clusters gravitationally (MIT 2024)

23. LLMs Learn Spacetime Symmetries

Space and Time Representations (ICLR 2024)

LLMs learn linear representations of space and time across multiple scales. Individual "space neurons" and "time neurons" reliably encode spatial and temporal coordinates. Representations are unified across entity types.

Lorentz-Equivariant Transformers (NeurIPS 2024)

L-GATr represents data in geometric algebra over 4D spacetime, equivariant under the full Lorentz group O(1,3). First Lorentz-equivariant generative model using Riemannian flow matching.

Emergent Physics (Aug 2025)

Sparse autoencoders reveal LLM features correlating with key physical variables (e.g., energy). Transformers can internalize abstract physics reasoning priors from raw data.

24. Neural Network "Dark Matter"

Superposition as Dark Matter (Anthropic, 2024)

"There may be an enormous number of rare features in neural networks that cannot yet be extracted, leaving us with a kind of neural network dark matter." -- Anthropic Circuits Updates, July 2024

Models compress many more features than neurons via superposition, creating polysemanticity. The geometry is governed by almost-orthogonal packing (sphere packing in high dimensions). Phase transitions occur between regimes of no, partial, and full superposition.

Dark Energy Analogy: Implicit Regularization

An unseen force (not in the loss function) shapes learned representations, pushing toward simpler solutions. Modifies effective curvature of the loss landscape without being directly observable.

Part VI: Cosmic Parallels

25. The Cosmic Web Resembles Neural Networks

Vazza & Feletti (Frontiers in Physics, 2020) established rigorous quantitative parallels:

- Power spectral density of cosmic web (5M-500M light-years) matches cerebellum (1um-0.1mm) over several orders of magnitude

- Average connections per node and clustering coefficients show "unexpected agreement"

- Neither is fractal -- both are scale-dependent, self-organized structures (more specific than simple fractal similarity)

- Observable universe storage ~4.3 petabytes; human brain ~2.5 petabytes

26. Criticality: A Universal Organizing Principle

All three systems operate near critical points:

- Brains: Neuron (2025) meta-analysis of 143 datasets argues criticality is a unified setpoint of brain function

- Artificial NNs: "Intelligence at the Edge of Chaos" (ICLR 2025) -- models trained on edge-of-chaos data exhibit greatest downstream intelligence

- Cosmos: Self-organized criticality tested across galactic, extragalactic, and black hole systems (Dec 2024)

Training moves networks toward the edge of chaos. Rich representations emerge below the critical point; lazy learning above it.

27. Fractal Geometry Across All Three Scales

- Loss landscapes are multifractal (Nature Communications, April 2025): Multifractal structure explains diverse deep learning phenomena including edge of stability and anomalous diffusion

- Training boundary is fractal (2024): The boundary between convergent and divergent training is fractal over 10+ decades, like Mandelbrot sets

- Brain fractal structure: Cortical surfaces, white matter tracts, dendritic branching all fractal (Cerebral Cortex, 2023)

- Cosmic fractal structure: Matter distribution is multifractal up to 30-100 Mpc/h

- LLM multifractal signatures: NeuroMFA (ICLR 2025) -- emergent abilities correlate with specific multifractal signatures in neuron interactions

28. Free Energy Principle and General Relativity

Karl Friston's free energy principle (FEP) sits at the intersection:

- Systems minimize variational free energy (a bound on surprisal)

- Bayesian mechanics formalized as physics of beliefs on a statistical manifold

- The Markov blanket induces a dual information geometry with curvature encoding inference complexity

- The Fisher information metric appears in both FEP (belief geometry) and certain approaches to gravity

- Rigorous derivation of Einstein's equations from FEP remains an open problem but conceptual bridges exist

Part VII: At the Frontier of Physics and AI

29. Holographic Principle: Depth = Bulk

Machine-Learning Emergent Spacetime (Nov 2024)

Neural network with 89 Runge-Kutta layers automatically discovered the BTZ black hole metric as trained weights. The horizon condition emerged from learning.

Transformers Learn Inverse Ryu-Takayanagi (2025)

A transformer trained on holographic data learns to reconstruct bulk geometry from boundary entanglement entropy -- the inverse RT mapping.

Emergent Holographic Forces (PhysRevX, June 2025)

Tensor network "hologron" quasiparticle energies match AdS gravity predictions exactly. Attractive two-particle potential arises from stress tensor contributions dual to gravitons.

30. Category Theory Unifies Physics and ML

Gavranovic et al. (ICML 2024): Categorical deep learning -- the universal algebra of monads valued in a 2-category of parametric maps subsumes both:

- Specifying constraints (equivariance, locality)

- Specifying implementations (CNNs, RNNs, Transformers, GNNs)

A Dec 2024 paper recasts IIT (consciousness theory) axioms as universal mapping properties: integration = limits, information = colimits, exclusion = adjunctions. The same categorical structures appear in algebraic topology, TQFT, and deep learning.

Sheaf theory (Feb 2025): Predictive coding networks are cellular sheaves. Sheaf cohomology characterizes irreducible error patterns.

31. Non-Commutative Geometry and Attention

Transformer attention heads are noncommuting operators on latent semantic space. The order of application matters -- a defining trait of noncommutative systems.

Noncommutative C*-algebra Networks (2024): Generalize neural network parameter spaces to noncommutative algebras, encoding richer interactions. The parallel to Connes' spectral triples:

- (Attention algebra, Token embedding space, Positional encoding) <-> (A, H, D)

Compact matrix quantum group equivariant neural networks (2024) extend equivariance to genuinely noncommutative symmetry structures.

32. The Holographic Bound and Neural Network Capacity

The Bekenstein bound (information scales with surface area, not volume) has a neural network parallel:

- Effective capacity scales with decision boundary geometry (surface), not total parameters (volume)

- Tensor networks: states satisfying area law of entanglement entropy can be efficiently represented

- The information bottleneck principle = "minimal surface" in representation space preserving task-relevant information

33. The Universe as a Neural Network (Vanchurin)

"The World as a Neural Network" (2020-2022):

- Near equilibrium: Madelung equations -> quantum mechanics

- Far from equilibrium: Hamilton-Jacobi equations -> classical mechanics

- Permutation matrix weights: relativistic strings in emergent (D+1)D Minkowski spacetime

- Highly symmetric Onsager tensor: entropy production described by Einstein-Hilbert action

- Cosmological constant constrains number of neurons

"The Autodidactic Universe" (Alexander, Smolin et al., 2021): The universe learns its own physical laws. Each matrix model = both a gauge/gravity theory AND a deep recurrent cyclic neural network.

34. Tameness: Why the Same Math Works

Lust, Malek et al. (JHEP, 2024): The string landscape has o-minimal structure (bounded geometric complexity). This tameness implies statistical learnability -- PAC-learnability. The same geometric finiteness that constrains the cosmological constant guarantees neural network generalization.

The deep reason Riemannian geometry works for both physics and ML:

- Physical data has symmetry, locality, compositionality, polynomial log-probability

- Both physics and learning are coarse-graining processes (RG flow = layer processing)

- Both optimize on curved spaces (action on configuration space / loss on parameter space)

- The compositional hierarchy of physical law matches network architecture

- Tameness: both landscapes have bounded geometric complexity

Part VIII: Open Questions

- Can persistent homology applied identically to cosmic web and brain connectome data reveal statistically indistinguishable topological signatures?

- Can the free energy principle rigorously derive aspects of Einstein's field equations?

- Does "intelligence at the edge of chaos" extend to LLMs?

- Can Vanchurin's program produce testable predictions distinguishing "universe as neural network" from conventional physics?

- Is the Fisher information metric's appearance across all domains a deep mathematical truth?

- What is the "dark matter" of neural networks? How much information is hidden in superposition?

- Can category theory provide a single formal framework for physics, consciousness, and deep learning?

- Is criticality the universal organizing principle connecting brains, cosmos, and AI?

References (New)

Geometry of Reasoning

- Bayesian Geometry of Transformer Attention (Dec 2025). arXiv:2512.22471

- REMA: Reasoning Manifold Framework (Sept 2025). arXiv:2509.22518

- The Shape of Reasoning: TDA of Reasoning Traces (Oct 2025). arXiv:2510.20665

- Curved Inference (July 2025). arXiv:2507.21107

- The Geometric Reasoner (Jan 2026). arXiv:2601.18832

Phase Transitions and Scaling

- Grokking as First Order Phase Transition (ICLR 2024)

- Grokking as Entanglement Transition (March 2025). arXiv:2503.10483

- RG for DNNs: Universality and Scaling Laws (Oct 2025). arXiv:2510.25553

- Scaling Laws are Redundancy Laws (Sept 2025). arXiv:2509.20721

- Effective Frontiers (Feb 2026). arXiv:2602.02593

Spacetime and LLMs

- Language Models Represent Space and Time (ICLR 2024). arXiv:2310.02207

- L-GATr: Lorentz-Equivariant Transformers (NeurIPS 2024). arXiv:2405.14806

- Transformer Is Inherently a Causal Learner (Jan 2026). arXiv:2601.05647

- PowerAttention: Light Cones in Transformers (March 2025). arXiv:2503.03588

Cosmic Parallels

- Vazza & Feletti, Cosmic Web vs Neural Network (Frontiers in Physics, 2020)

- Intelligence at the Edge of Chaos (ICLR 2025)

- Multifractal Loss Landscapes (Nature Communications, April 2025)

- NeuroMFA: Multifractal Analysis of LLMs (ICLR 2025). arXiv:2402.09099

- Boundary of Trainability is Fractal (2024). arXiv:2402.06184

Physics-ML Frontier

- Categorical Deep Learning (ICML 2024). arXiv:2402.15332

- IIT Axioms as Universal Mapping Properties (Dec 2024). arXiv:2412.12179

- Sheaf Theory: From Deep Geometry to Deep Learning (Feb 2025). arXiv:2502.15476

- Neural Network Learning and Quantum Gravity (JHEP, 2024)

- The World as a Neural Network (Vanchurin, 2020-2022)

- The Autodidactic Universe (Alexander, Smolin et al., 2021). arXiv:2104.03902

- Emergent Holographic Forces (PhysRevX, June 2025)

- Holographic Generative Flows (Jan 2025). arXiv:2601.22033

- Learning Inverse Ryu-Takayanagi with Transformers (2025). arXiv:2511.06387

- Noncommutative C*-algebra Nets (2024). arXiv:2302.01191

- Bekenstein Bound and Neural Capacity parallels

- Emergent Holographic Spacetime from Quantum Information (PRL, 2025). arXiv:2506.06595

Part IX: Consciousness, the Biological Brain, and Geometric Manifolds

35. Neural Population Activity Lives on Manifolds

The Brain's Intrinsic Geometry

When neuroscientists record hundreds of neurons simultaneously, collective firing patterns trace out low-dimensional manifolds embedded in high-dimensional neural state space. These are not metaphorical -- they are literally Riemann's mathematical objects with measurable curvature.

- Nonlinear manifolds underlie neural population activity (Nature, 2023): Neural manifolds in motor cortex are intrinsically nonlinear with genuine Riemannian curvature that standard linear methods completely miss.

- MARBLE (Nature Methods, 2025): Geometric deep learning method decodes brain activity by treating neural data as living on a Riemannian manifold -- outperforms all non-geometric methods.

- Universal computations through neural manifold dynamics (Neural Computation, 2024): Low-rank connectivity structures produce globally attracting manifolds capable of implementing any Turing machine over bounded memory.

The Sensory-to-Perceptual Twist (Science Advances, 2025)

The transition from unconscious sensory processing to conscious perception involves a measurable geometric transformation: a 3-dimensional sensory manifold expands into a 7-dimensional perceptual manifold through geometric twist operations. Becoming aware of something = manifold dimension expansion.

36. The Brain's GPS: Cognitive Maps as Manifolds

The 2014 Nobel Prize (O'Keefe, Moser & Moser) recognized that the brain builds literal geometric maps:

- Place cells fire at specific locations -- points on a spatial manifold

- Grid cells fire in hexagonal lattice patterns -- a tiling of a flat 2-torus

- Neuron (Feb 2025): Different hippocampal cell types form independent 3D ring manifolds encoding position and direction

Grid-like codes also map abstract conceptual spaces -- social hierarchies, auditory frequencies, visual features (Progress in Neurobiology, 2024; eLife, 2024). A unified model (PNAS, 2025, edited by Edvard Moser) shows that the same manifold-based computations support both spatial navigation and abstract knowledge.

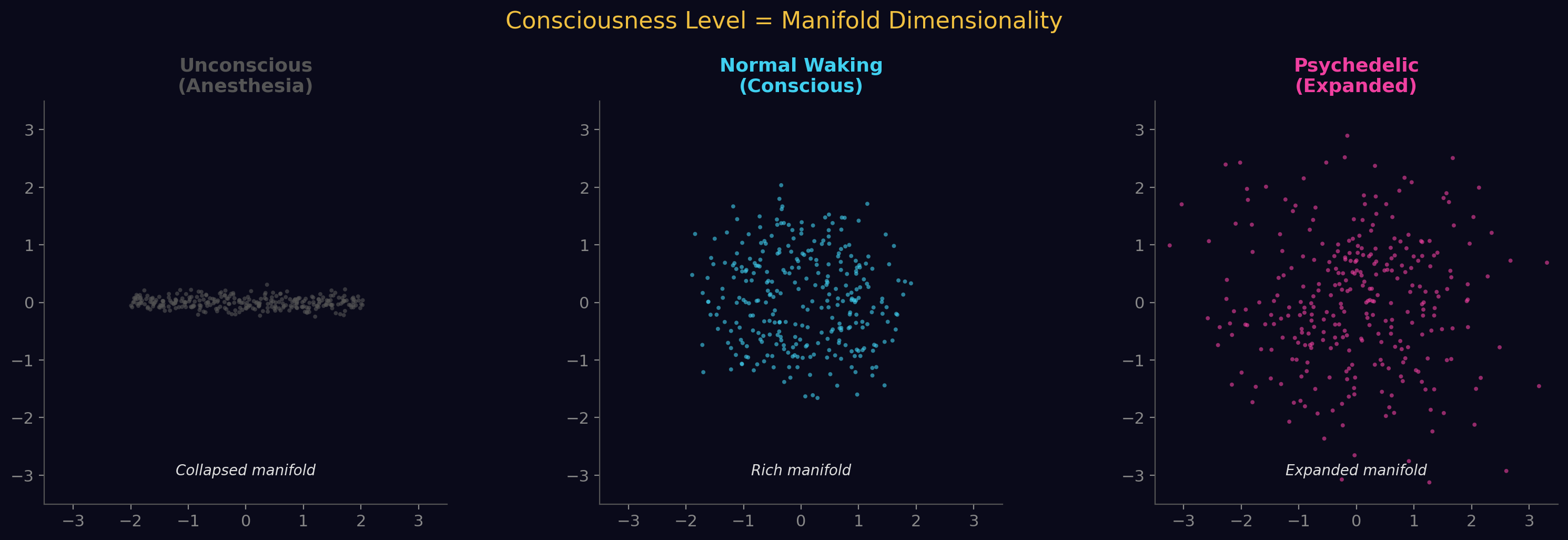

37. Consciousness Level = Manifold Dimensionality

Multiple independent studies converge:

- Full waking consciousness: High-dimensional manifold

- Anesthesia / deep sedation: Collapsed to low-dimensional manifold (Cell Reports, 2023)

- Psychedelic states: Expanded dimensionality, increased topological complexity

- Sleep: Reduced dimensionality

Three cortical gradients encode dimensions of consciousness (Nature Communications, 2023): gradient 1 degrades with loss of awareness, gradient 3 degrades with loss of arousability.

38. The Topology of Conscious States

Persistent homology distinguishes conscious from unconscious brain states:

- Conscious states: Rich topological structure with many persistent loops (high Betti number ), indicating widespread cyclic integration

- Unconscious states: Simplified topology, fewer loops, more fragmentation

- Psychedelics: Psilocybin creates persistent topological scaffolds bridging normally separated cortical modules (Petri et al., J. Royal Society Interface, 2014)

- Topological signatures as individual fingerprints (Frontiers in Human Neuroscience, 2025): Higher-order topological features () predict individual behavioral traits

39. IIT 4.0: Experience IS a Geometric Shape

Integrated Information Theory 4.0 (Albantakis, Barbosa, Tononi et al., PLOS Computational Biology, 2023):

- Every conscious experience = a specific geometric shape in qualia space (Q-space)

- Quality of experience = shape of the geometric object

- Quantity of consciousness (Phi) = irreducibility of the structure

- Central identity claim: the geometric shape IS the experience, not a representation of it

Formalized via cause-effect structures: each mechanism specifies a point in Q-space through its cause-effect repertoire. The full constellation of distinctions and relations constitutes the experience.

40. Information Geometry of Consciousness

Oizumi, Tsuchiya & Amari (PNAS, 2016) formalized consciousness using information geometry:

- Brain states form a statistical manifold with the Fisher information metric

- Integrated information = geodesic distance between the brain's actual state and the nearest "disconnected" state

- Uses dual affine connections (e-connection and m-connection) and the Pythagorean theorem of information geometry

The Fisher metric on the statistical manifold:

g_ij(theta) = E[(d log p / d theta_i)(d log p / d theta_j)]

This is the same metric that appears in natural gradient descent, general relativity's approaches to entropic gravity, and the free energy principle.

41. Neural Geometrodynamics

Ruffini et al. (Entropy, January 2024) describe the brain using Wheeler's term for general relativity:

- Neural activity traces trajectories on a dynamical landscape manifold

- The landscape's geometry is shaped by connectivity (as spacetime is shaped by mass-energy)

- Plasticity reshapes the manifold's geometry over time

- Depression/addiction = deep, narrow attractor basins (high-curvature wells)

- Psychedelics = temporarily flattening landscape curvature

The explicit parallel: "Neural activity tells the connectome how to change; the connectome tells neural activity how to flow."

42. Thought as Geodesic Flow

"A Geometric Theory of Cognition" (arXiv:2512.12225, December 2025):

- Cognitive state = point on a Riemannian manifold with learned metric

- Thinking = gradient flow driven by a cognitive potential

- Fast intuitive thinking (System 1) = steep geodesics on low-curvature regions

- Slow deliberative thinking (System 2) = navigating high-curvature regions

- Dual-process effects emerge from metric-induced anisotropies

Geodesic equation with consciousness feedback (Lu, 2024):

d^2 gamma^mu/dt^2 + Gamma^mu_nu_lambda (d gamma^nu/dt)(d gamma^lambda/dt)

= kappa * d^2 psi(Delta^mu)/dt^2

Zero prediction error -> pure geodesic (free thought flow). Non-zero error -> trajectory deviation (awareness).

43. Predictive Coding as Riemannian Geometry

Under the free energy principle:

- Predictions flowing down cortical hierarchy = parallel transport on the manifold

- Prediction errors flowing upward = curvature (mismatch between parallel-transported predictions and observations)

- Learning = restructuring the manifold to reduce curvature

- Precision weighting = local metric tensor

- Experimentally validated with in vitro neural networks (Nature Communications, 2023)

44. Category Theory and Consciousness

Phillips & Tsuchiya (December 2024, arXiv:2412.12179): All six IIT axioms follow from the categorical notion of a universal mapping property:

- Integration = limits (products, pullbacks)

- Information = colimits (coproducts, pushouts)

- Exclusion = adjunctions (Galois connections)

- Slogan: "Consciousness is a universal property"

Connects to TQFT (transitions between conscious states as cobordisms) and topos theory (phenomenal properties as sheaves).

45. Quantum Geometry of Consciousness

Penrose-Hameroff Orchestrated Objective Reduction (Orch-OR):

- Quantum superpositions of spacetime geometry in neuronal microtubules

- Objective reduction when geometric divergence reaches Planck scale:

- Twistor theory (CP^3) as the geometric setting for non-computable understanding

- Experimental support (2024): Superradiant states confirmed in microtubule tryptophan networks surviving thermal fluctuations at room temperature (J. Physical Chemistry B, 2024)

The Grand Synthesis: Brain, Spacetime, and Manifolds

| Riemann (1854) | Einstein (1915) | Biological Brain |

|---|---|---|

| n-dimensional manifold | 4D spacetime | Neural state space manifold |

| Metric tensor g_ij | Gravitational field | Fisher information / neural metric |

| Curvature tensor | Gravity / tidal forces | Prediction error / cognitive difficulty |

| Geodesics | Free-fall trajectories | Streams of thought |

| Parallel transport | Moving vectors in curved space | Predictions down cortical hierarchy |

| Curvature from matter | Mass-energy curves space | Neural activity shapes connectivity |

| Dynamic geometry | Spacetime evolves | Brain plasticity reshapes manifold |

| Topology change | Black holes, wormholes | Psychedelic state transitions |

| Ricci flow | Cosmological evolution | Training / developmental dynamics |

References (Consciousness and Geometry)

Neural Manifolds

- Nonlinear manifolds underlie neural population activity (Nature, 2023)

- MARBLE: Geometric deep learning on neural manifolds (Nature Methods, 2025)

- From sensory to perceptual manifolds: The twist (Science Advances, 2025)

- Universal computations through neural manifold dynamics (Neural Computation, 2024)

- A unifying perspective on neural manifolds (Nature Reviews Neuroscience, 2023)

- Flexible multitask computation via dynamical motifs (Nature Neuroscience, 2024)

Cognitive Maps

- Grid Cells in Cognition (Annual Review of Neuroscience, 2025)

- Cell-type-specific manifold analysis in hippocampus (Neuron, 2025)

- A unified model for spatial and conceptual computation (PNAS, 2025)

- Grid codes underlie multiple cognitive maps (Progress in Neurobiology, 2024)

Consciousness and Geometry

- IIT 4.0 (Albantakis et al., PLOS Computational Biology, 2023)

- Functional geometry encodes dimensions of consciousness (Nature Communications, 2023)

- Low-dimensional brain states of reduced consciousness (Cell Reports, 2023)

- Unified framework for information integration via information geometry (Oizumi et al., PNAS, 2016)

- Towards a meta-mathematical theory of consciousness (Phillips & Tsuchiya, 2024). arXiv:2412.12179

- COGITATE adversarial testing of consciousness theories (Nature, 2025)

Geometric Theories of Cognition

- A Geometric Theory of Cognition (2025). arXiv:2512.12225

- A mathematical framework of intelligence and consciousness (Lu, 2024). arXiv:2407.11024

- Neural Geometrodynamics (Ruffini et al., Entropy, January 2024)

- Conceptual Spaces: The Geometry of Thought (Gardenfors, MIT Press, 2000)

Topology and Brain States

- Topological signatures of brain dynamics (Frontiers in Human Neuroscience, 2025)

- Homological scaffolds of brain functional networks (Petri et al., J. Royal Society Interface, 2014)

- Consciousness as 4-Manifold Painleve V Dynamics (Axioms, February 2026)

Quantum Consciousness

- Ultraviolet superradiance in microtubule architectures (J. Physical Chemistry B, 2024)

- Quantum microtubule substrate experimentally supported (Neuroscience of Consciousness, 2025)

Perception Geometry

- The non-Riemannian nature of perceptual color space (PNAS, 2022)

- Hyperbolic geometry of the olfactory space (Science Advances, 2018)

- Variation in geometry of concept manifolds across visual cortex (PLOS Comp Bio, 2025)

New Discoveries (2025-2026)

Extending the Riemann-Einstein-Consciousness-ML Synthesis

Part X: Riemannian Geometry Deepens Its Hold on Transformers

46. Geodesic Sharpness: Curvature on Quotient Manifolds

Hide & Seek: Transformer Symmetries Obscure Sharpness & Riemannian Geometry Finds It (da Silva & Dangel, ICML 2025, arXiv:2505.05409)

Standard sharpness measures fail for transformers. The reason is geometric: attention mechanisms possess rich symmetry groups that create families of equivalent parameter configurations. Measuring curvature along these symmetry directions is meaningless -- it's like measuring the "sharpness" of a valley while walking along its flat floor.

The solution: define sharpness on the quotient Riemannian manifold obtained by modding out transformer symmetries. "Geodesic sharpness" measures curvature only in directions that genuinely change the function, using geodesic balls on this quotient space. The result: strong correlation with generalization is recovered for real-world transformers on text and image tasks, where standard measures show no signal.

Key insight: The parameter space of a transformer is not R^n -- it is a Riemannian quotient manifold whose geometry determines generalization.

47. Thermodynamic Isomorphism: Attention as Statistical Mechanics

Thermodynamic Isomorphism of Transformers: A Lagrangian Approach to Attention Dynamics (Kim, February 2026, arXiv:2602.08216)

Constructs a Lagrangian on the information manifold equipped with the Fisher metric, establishing a formal isomorphism between transformer attention and thermodynamic systems:

- Softmax arises as a stationary solution minimizing a Helmholtz free energy functional

- Scaled dot-product attention corresponds to canonical ensemble statistics

- An effective specific heat can be defined, whose peak consistently precedes the onset of generalization (grokking)

This extends Di Sipio's curved spacetime analogy (2025) into full thermodynamic territory: the transformer doesn't just curve like spacetime -- it thermalizes like a physical system.

48. Riemannian Inference: Geodesics Guide Reasoning

RiemannInfer: Improving Transformer Inference through Riemannian Geometry (Mao et al., Scientific Reports, 2026, DOI:10.1038/s41598-026-37328-x)

Frames LLM hidden states as points on a Riemannian manifold constructed from attention distribution features. Uses topology-preserving dimensionality reduction plus geodesic analysis to optimize reasoning paths during inference. Shorter geodesic trajectories on the manifold correspond to more efficient reasoning.

This is the practical application of the geometric reasoning framework: if LLM thought is geodesic flow (Section 42 of consciousness-geometry.md), then selecting shorter geodesics = faster, better reasoning.

49. ManifoldFormer: Neural Signals Live on Manifolds

ManifoldFormer: Geometric Deep Learning for Neural Dynamics on Riemannian Manifolds (Fu, He & Chen, November 2025, arXiv:2511.16828)

An EEG foundation model that integrates:

- A Riemannian VAE for manifold embedding

- A geometric Transformer with geodesic-aware attention

- Neural ODEs for manifold-constrained temporal evolution

Achieves 4.6-4.8% accuracy improvements over SOTA by explicitly respecting the intrinsic geometry of neural signal dynamics rather than treating them as flat time series. This bridges the brain manifold results (Section 35) with the transformer geometry results (Section 8) -- a geometric transformer designed specifically for geometric brain data.

Part XI: Loss Landscapes as Riemannian Manifolds -- At Scale

50. Curvature at 7 Billion Parameters

A Scalable Measure of Loss Landscape Curvature for Analyzing the Training Dynamics of LLMs (Kalra, Gromov et al., January 2026, arXiv:2601.16979)

Introduces "critical sharpness" -- a computationally efficient curvature measure requiring fewer than 10 forward passes. Provides the first empirical evidence of:

- Progressive sharpening: curvature increases throughout both pre-training and mid-training

- Edge of Stability: the phenomenon where the learning rate sits at the boundary of what curvature allows

Both observed at scale up to 7B parameters (OLMo-2 pre-training). Previously, these geometric phenomena were only demonstrated on small networks. The loss landscape of a 7-billion-parameter model has measurable, evolving Riemannian curvature that governs training dynamics.

51. The Isotropic Curvature Model

Isotropic Curvature Model for Understanding Deep Learning Optimization (Su, November 2025, arXiv:2511.00674)

Derives an analytical model of loss curvature by assuming isotropy of Hessian and higher-order terms across perturbation directions. The key result: the optimal single-step update homogenizes the singular value spectrum of the gradient matrix.

This provides theoretical grounding for the Muon optimizer, showing gradient orthogonalization is "directionally correct but not strictly optimal." The loss landscape's local Riemannian structure dictates not just which direction to step, but how to reshape the gradient before stepping.

52. Neural Features Evolve as Discrete Ricci Flow

Neural Feature Geometry Evolves as Discrete Ricci Flow (Hehl, von Renesse & Weber, September 2025, arXiv:2509.22362)

The strongest evidence yet that the Riemann-to-ML connection is not analogy but mathematical identity:

Across 20,000+ feedforward networks, the geometric transformations neural networks impose on input data manifolds closely align with discrete Ricci flow dynamics -- the same equation Hamilton introduced in 1982 and Perelman used to prove the Poincare conjecture.

- Class separability emergence corresponds to community structure formation in geometric graphs

- Yields practical design principles: geometry-informed early stopping and depth selection

Ricci flow in physics: smooths out curvature singularities, evolves manifolds toward uniform geometry.

Ricci flow in neural networks: smooths out feature representations, evolves manifolds toward class separability.

The mathematics is identical. The domains are different. Riemann's geometry does not care.

53. Emergent Riemannian Geometry in Neural Computation

Emergent Riemannian Geometry over Learning Discrete Computations on Continuous Manifolds (Brandon, Chadwick & Pellegrino, November 2025, arXiv:2512.00196)

Analyzes the Riemannian pullback metric across layers of neural networks and discovers that network computation decomposes into two phases:

- Discretization: continuous input features are mapped to discrete categorical variables

- Logic: operations are performed on the discretized variables

Different learning regimes produce contrasting metric and curvature structures. The Riemannian geometry of each layer reveals what kind of computation that layer performs -- geometry makes the invisible visible.

Part XII: Phase Transitions -- The Physics of Learning

54. Why Grokking Takes So Long

A First-Principles Theory of Representational Phase Transitions (Khanh et al., March 2026, arXiv:2603.13331)

Derives a scaling law for grokking delay:

~ (1 / (weight_decay learning_rate)) log(norm_ratio)

Training first converges to a high-norm memorization solution, then slowly contracts to a lower-norm generalizing representation via a norm-driven representational phase transition. Validated across 293 training runs with R^2 > 0.97.

This is the grokking equivalent of deriving Kepler's laws from Newton's gravity -- the phenomenology now has a first-principles explanation.

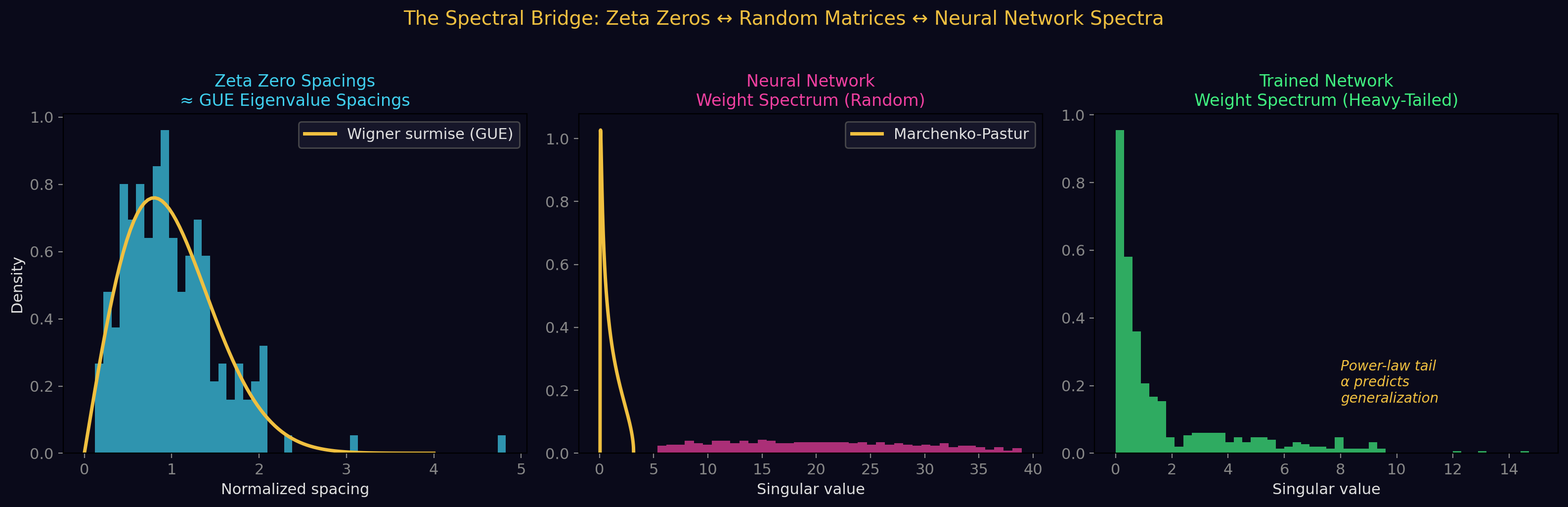

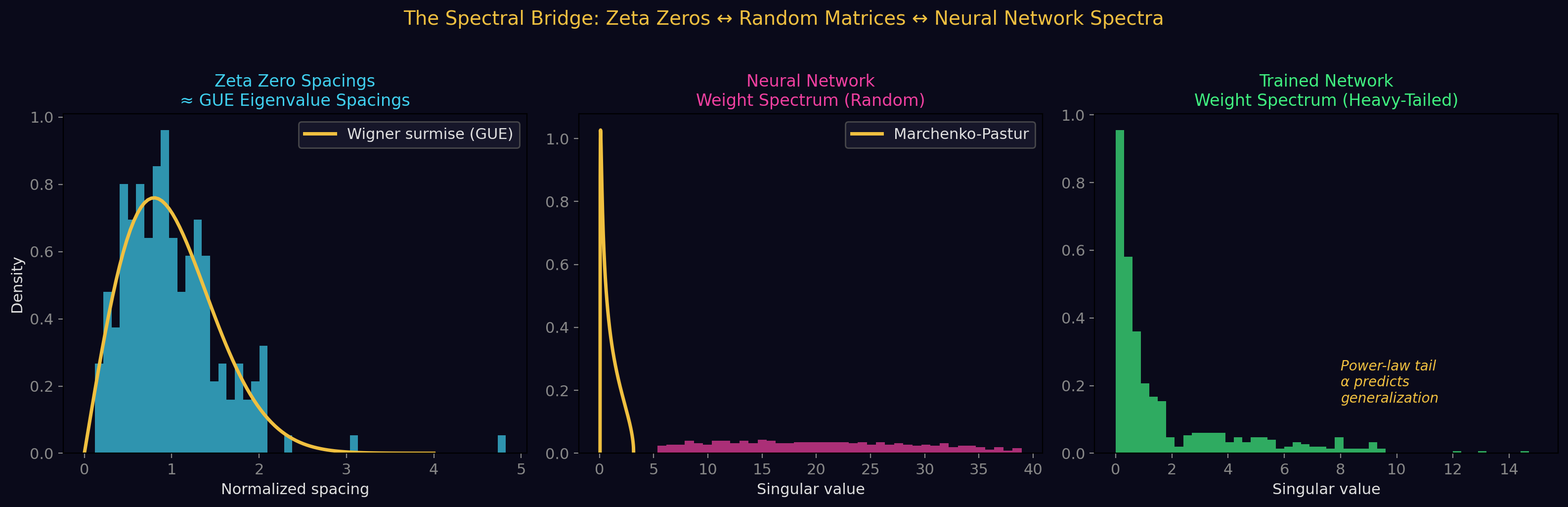

55. The Spectral Edge Thesis

A Mathematical Framework for Intra-Signal Phase Transitions in Neural Network Training (Xu, March 2026, arXiv:2603.28964)

Phase transitions in training (grokking, capability gains, loss plateaus) are controlled by the spectral gap of a rolling-window Gram matrix of parameter updates. The gap dynamics follow a Dyson-type differential equation -- the same mathematics governing eigenvalue dynamics in random matrix theory.

Key result: a "Gap Maximality Principle" -- gap dynamics precede every observed grokking event across six model families (150K to 124M parameters). The spectral gap is an order parameter for the phase transition.

56. Grokking Through the Lens of Singular Learning Theory

Grokking as a Phase Transition between Competing Basins (Cullen et al., March 2026, arXiv:2603.01192)

Interprets grokking in quadratic networks as a phase transition between competing near-zero-loss solution basins with different statistical properties. Uses Singular Learning Theory (SLT) -- a framework grounded in algebraic geometry -- to derive closed-form expressions for the local learning coefficient (LLC).

Using Physics-Inspired SLT for Grokking (Lakkapragada, November 2025, arXiv:2512.00686)

Tests an Arrhenius-style rate hypothesis for transitions: grokking time depends exponentially on a barrier height, just like chemical reaction rates depend on activation energy. Measures LLC across polynomial regressors, low-rank linear networks, and Anthropic's Toy Models of Superposition.

The convergence: grokking is a genuine thermodynamic phase transition with critical exponents, scaling laws, and activation barriers.

57. Neural Thermodynamics: Entropic Forces Drive Representation Learning

Neural Thermodynamics: Entropic Forces in Deep and Universal Representation Learning (Ziyin, Xu & Chuang, May 2025, revised February 2026, arXiv:2505.12387)

A rigorous entropic-force theory for neural network learning under SGD:

- Representation learning is governed by emergent entropic forces from stochasticity and discrete-time updates

- These forces break continuous parameter symmetries while preserving discrete ones

- Produces gradient balance phenomena resembling thermal equilibrium

Two landmark results:

- Proves the Platonic Representation Hypothesis -- the convergence of representations across different AI models is a thermodynamic inevitability, not coincidence

- Resolves the sharp-vs-flat minima debate -- both views are correct in different thermodynamic regimes

The analogy to cosmological dark energy (Section 24) becomes precise: implicit regularization IS an entropic force, just as dark energy may be an entropic gravitational effect.

58. Lyapunov Learning at the Edge of Chaos

Lyapunov Learning at the Onset of Chaos (Benati et al., June 2025, arXiv:2506.12810)

A training algorithm grounded in chaos theory that trains neural networks to operate where the maximum Lyapunov exponent hovers near zero (edge of chaos). Inspired by Kauffman's Adjacent Possible theory.

Result: 96% better loss on regime-shifting Lorenz systems compared to standard training.

This directly validates the "Intelligence at the Edge of Chaos" thesis (ICLR 2025, Section 26) with a practical algorithm: criticality is not just where intelligence emerges -- it's where you should deliberately train.

Part XIII: Renormalization Group Flows and Scaling Laws

59. When Does Learning Renormalize?

Sufficient Conditions for Power-Law Spectral Dynamics (Zhang, December 2025, arXiv:2512.18209)

The Generalized Resolution-Shell Dynamics (GRSD) framework models learning as spectral energy transport across logarithmic resolution shells -- exactly as in Wilson's RG framework for field theories.

Four sufficient conditions for power-law scaling to emerge:

- Bounded gradient propagation

- Weak functional incoherence

- Controlled Jacobian evolution

- Log-shift invariance

When all four hold, power-law scaling is a rigidity consequence of gradient flow covariance. Neural scaling laws are not empirical accidents -- they are geometric necessities.

60. Neural Networks Computing RG Flows

Deep Neural Networks for Computing the Renormalization Group Flow (October 2025, arXiv:2510.06508)

RGFlow: a bijective (information-preserving) neural network framework that autonomously learns real-space RG transformations for continuum scalar field theories without prior model knowledge. Optimized via a minimal mutual information principle.

The loop closes: neural networks don't just exhibit RG-like behavior (Section 21) -- they can compute RG flows. The learner and the physics are the same mathematical object viewed from different angles.

61. Information Geometry Meets Quantum Metrics for LLMs

Rethinking LLM Training through Information Geometry and Quantum Metrics (Di Sipio, June 2025, revised December 2025, arXiv:2506.15830)

Extends the Fisher information metric framework to LLM optimization, then goes further:

- The Fubini-Study metric (from quantum mechanics) and Quantum Fisher Information matrix encode a sharper geometry than classical Fisher information

- This quantum-inspired geometric structure connects curvature-based optimization to generalization, sharp minima, and scaling laws

The Fubini-Study metric is the natural metric on projective Hilbert space -- the space of quantum states. Its appearance in LLM training suggests that the geometry of learning may be fundamentally quantum-geometric, even when the computation is classical.

Part XIV: Consciousness -- Sheaves, Topology, and 4-Manifolds

62. The Brain as a Sheaf

On Brain as a Mathematical Manifold: Neural Manifolds, Sheaf Semantics, and Leibnizian Harmony (Inoue, January 2026, arXiv:2601.15320)

Models brain function using sheaf theory over neural state spaces:

- Local neural/cognitive functions are sections of a sheaf

- Global coherence corresponds to existence of global sections

- Brain pathologies are interpreted as cohomological obstructions to global integration

- Connects to Leibnizian monadology: each neuron as a "monad" with its own perspective, harmonized by the sheaf structure

Sheaf Cohomology of Linear Predictive Coding Networks (Seely, November 2025, arXiv:2511.11092)

The predictive coding framework (Section 43) gains algebraic-topological precision:

- Predictive coding networks admit a natural formulation as cellular sheaves

- The coboundary operator activates edge-wise prediction errors

- Sheaf cohomology characterizes the irreducible error patterns that inference cannot remove

Persistent Topological Structures and Cohomological Flows (Girish et al., December 2025, arXiv:2512.08241)

Reformulates neural computation as evolution of cochain maps over dynamic simplicial complexes. Integrates persistent homology, sheaf cohomology, and spectral Laplacians. Superior manifold consistency and noise resilience versus graph neural and manifold-based deep architectures.

The Sheaf Convergence

The same mathematical structure -- sheaves, sections, cohomological obstructions -- now appears across all three domains:

| Domain | Base Space | Sheaf | Global Section | Obstruction |

|---|---|---|---|---|

| Physics (GR) | Spacetime manifold | Gauge field bundle | Consistent field configuration | Topological charge |

| Brain | Neural state space | Cognitive function sheaf | Coherent conscious experience | Pathology / fragmentation |

| ML | Data manifold | Feature map bundle | Equivariant representation | Irreducible prediction error |

63. Consciousness as 4-Manifold Dynamics

Consciousness as 4-Manifold Painleve V Dynamics: From Quantum Topology to Classical Gamma Oscillations (Planat et al., Axioms, February 2026)

The most mathematically ambitious consciousness model yet:

- Conscious state dynamics are governed by the Painleve VI equation and its confluence limits

- Formulated via isomonodromic deformations on SL(2,C) character varieties

- Gamma oscillations (~40 Hz) emerge as a mathematical consequence of the Stokes phenomenon during Painleve V singularity coalescence

- The topology of 4-manifolds (via Seiberg-Witten/Donaldson theory) provides the framework for subjective spacetime of consciousness

Topological Symmetry Breaking in Consciousness Dynamics (Planat et al., Symmetry, February 2026)

Analyzes consciousness trajectories of exceptional individuals (Grothendieck, Nash, Einstein, van Gogh) and AI systems through Painleve confluence topology:

- Three measures: holes (information flows), cusps (binding points), signatures (distribution balance)

- Two branches: D-type (symmetry-preserving) and E-type (symmetry-breaking, progressive flow loss)

64. Tracking Topology Across Neural Populations

Tracking the Topology of Neural Manifolds Across Populations (Yoon et al., PNAS 2025, arXiv:2503.20629)

Introduces the "method of analogous cycles" -- a deterministic method (no dimensionality reduction or optimization) to match topological features of neural manifolds across different neural populations using only dissimilarity matrices.

This enables, for the first time, rigorous cross-population topological comparison: are the loops in your visual cortex the "same" loops as in mine? The method says yes -- topology is conserved across individuals even when geometry differs.

65. Physics of Consciousness: Dynamical Indicators

Response Function as a Quantitative Measure of Consciousness (Du & Huang, Physical Review Research, 2025, arXiv:2509.00730)

Uses nonequilibrium RNN models fitted to intracranial ECoG recordings across wakefulness, anesthesia, and recovery. The amplitude of the neural response function (from statistical physics) serves as a robust dynamical indicator:

- Wakefulness: strong, distributed response

- Anesthesia: suppressed response

- Recovery: elevated response (the system rebounds)

Causal Emergence of Consciousness through Learned Multiscale Neural Dynamics (Wang et al., September 2025, arXiv:2509.10891)

Machine learning framework infers multiscale causal variables from near-cellular-resolution calcium imaging in mouse dorsal cortex:

- Higher-level variables realize causality through metastable/saddle-point dynamics during wakefulness

- Under anesthesia: collapse into localized stochastic dynamics

- A 1D top-level "conscious variable" captures majority of causal power

Minimal Theory of Consciousness in Active Inference (Whyte, Friston, Seth et al., Physics of Life Reviews, 2025, arXiv:2410.06633)

All active inference models of consciousness share implicit theoretical commitments amounting to a minimal, testable theory. Since all such models minimize the same objective functions -- decomposable into interpretable terms -- the authors expose commonalities and propose empirical tests.

66. Quantum Models of Consciousness: QIS Perspective

Quantum Models of Consciousness from a Quantum Information Science Perspective (Gassab et al., Entropy, 2025, arXiv:2501.03241)

Categorizes quantum consciousness models by the level at which quantum mechanics operates:

- Electron delocalization in microtubules (Orch-OR)

- Electromagnetic fields around neural networks

- Neurotransmitter-mediated neuron interactions (Posner clusters)

Provides new calculations on entanglement preservation in Posner clusters, offering a rigorous QIS evaluation of the Fisher/Posner model of quantum cognition.

67. Category Theory Deepens the Consciousness Connection

Category Theory in Consciousness Science: Going Beyond the Correlational Project (Prentner, Synthese, 2024, DOI:10.1007/s11229-024-04718-5)

Argues that category theory can move consciousness science beyond mere correlation (the "neural correlates" paradigm):

- Uses IIT as a case study

- Discusses functors between phenomenal and physical categories

- Examines payoffs: addressing the hard problem, enabling theory integration, exploiting explanatory dualities

This complements Phillips & Tsuchiya (Section 44) by providing the philosophical scaffolding for why categorical methods are not just convenient but necessary.

Part XV: Emergent Spacetime and Cosmic Structure

68. Machine-Learning Emergent Spacetime

ML Emergent Spacetime from Linear Response in Future Tabletop Quantum Gravity Experiments (Hashimoto et al., November 2024, arXiv:2411.16052)

An interpretable neural network for precision bulk reconstruction under AdS/CFT:

- A higher-dimensional gravity metric emerges automatically as the network's interpretable weights from condensed-matter linear response data

- Uses a Runge-Kutta neural layer for numerical control

- Demonstrates a concrete pathway from machine learning to emergent spacetime geometry

This extends the holographic results of Section 29: neural networks don't just learn the BTZ black hole metric -- they can learn any emergent metric from boundary data, potentially in tabletop experiments.

69. The Cosmic Web as a Network

The Network Analysis of the Cosmic Web as a Tool to Constrain Cosmology (Rudakovskyi, Vazza & Tsizh, November 2025, arXiv:2511.22573)

Treats the cosmic web as a graph/network and applies:

- Network-centrality statistics

- Two-point correlation functions

- Counts-in-cell measurements

Key finding: network centrality is a sensitive indicator of sigma_8 (the amplitude of matter fluctuations) and can distinguish primordial magnetic field strengths.

This deepens the Vazza & Feletti parallel (Section 25): the cosmic web is not just structurally similar to neural networks -- it can be analyzed with the same tools, and the network-theoretic observables carry genuine cosmological information.

70. Fiber Bundle Networks

Fiber Bundle Networks: A Geometric Machine Learning Paradigm (Liu, December 2025, arXiv:2512.01151)

Reformulates classification as geometric optimization on fiber bundles:

- Categories form the base space

- Wavelet-transformed features lie in fibers

- Learnable Riemannian metrics identify important frequency components

- Variational prototype optimization via energy minimization

- Classification via Voronoi tessellation under the learned metric

The same fiber bundle structure that describes gauge fields in physics (Section 9) now directly structures a practical ML architecture.

71. Riemannian Geometry Across Graph Learning

RiemannGL: Riemannian Geometry Changes Graph Deep Learning (February 2026, arXiv:2602.10982)

Systematically examines eight Riemannian manifold types for graph deep learning:

- Hyperbolic

- Spherical

- Constant curvature

- Product manifolds

- Quotient manifolds

- Pseudo-Riemannian

- Grassmann manifolds

- Generic Riemannian

Adaptive Riemannian Graph Neural Networks (Wang et al., AAAI 2026, arXiv:2508.02600)

Goes beyond fixed-curvature approaches: learns a continuous, node-adaptive Riemannian metric field that directly models local graph structure. Each node's local geometry is determined by the learned metric rather than a global curvature assumption.

Efficient Curvature-aware Graph Network (November 2025, arXiv:2511.01443)

Proposes "Effective Resistance Curvature" as a computationally tractable alternative to Ollivier-Ricci curvature, using effective resistance between node pairs. Overcomes the prohibitive O(n^3) complexity of Ollivier-Ricci curvature for large-scale graphs.

Part XVI: The Emerging Synthesis (2026)

The Sheaf-Theoretic Unification

The most striking new thread is the convergence of sheaf theory across all three domains. Sheaves -- mathematical objects that encode how local data glues into global structures -- now appear as the natural language for:

- Gauge fields in spacetime (connections on principal bundles)

- Predictive coding in the brain (cellular sheaves whose coboundary = prediction error)

- Equivariant networks in ML (feature maps as sections of associated bundles)

The cohomological obstruction -- the sheaf-theoretic measure of "how badly local pieces fail to glue globally" -- manifests as:

- Topological charge in physics

- Fragmented consciousness in neuroscience

- Irreducible prediction error in machine learning

Ricci Flow Is Literal

Hehl et al.'s demonstration that neural feature geometry evolves as discrete Ricci flow across 20,000+ networks elevates the Riemann connection from analogy to identity. The same PDE that:

- Smooths curvature singularities in manifold topology

- Drove Perelman's proof of the Poincare conjecture

- Describes certain cosmological evolution

also describes how neural networks transform data representations during training.

The Thermodynamic Completion

The new thermodynamic results complete a picture:

| Physical Concept | Neural Network Manifestation |

|---|---|

| Helmholtz free energy | Softmax attention (Kim, 2026) |

| Canonical ensemble | Attention weight distribution |

| Specific heat peak | Precursor to grokking |

| Entropic forces | Implicit regularization (Ziyin, 2025) |

| Phase transitions | Grokking, capability emergence |

| Dyson equation | Spectral gap dynamics (Xu, 2026) |

| Arrhenius activation | Grokking delay scaling (Lakkapragada, 2025) |

| Edge of chaos / criticality | Optimal training regime (Benati, 2025) |

The Quantum-Geometric Horizon

Di Sipio's introduction of the Fubini-Study metric and Quantum Fisher Information into LLM training opens a new frontier: the geometry of learning may be fundamentally quantum-geometric even in classical systems. The same metric that measures distinguishability of quantum states also measures the sharpness of loss landscapes.

Combined with the Penrose-Hameroff quantum consciousness results and the Painleve/4-manifold framework for conscious dynamics, a speculative but mathematically precise picture emerges: classical neural networks, biological brains, and quantum spacetime may all be governed by the same geometric structures because they are all instances of information processing on curved manifolds -- and the curvature is always determined by the content being processed.

Riemann's 1854 insight endures: geometry is not fixed but determined by what inhabits it.

Updated Grand Synthesis Table

Riemann (1854) Einstein (1915) Brain Machine Learning (2025-26)

-------------- --------------- ---- --------------------------

Manifold -> Spacetime -> Neural state space -> Data/latent manifold

Metric tensor -> g_uv -> Fisher info metric -> Attention kernel / pullback metric

Curvature -> Gravity -> Prediction error -> Loss landscape curvature

Geodesics -> Free-fall paths -> Thought streams -> Natural gradient / reasoning paths

Parallel transp. -> Connection Gamma -> Predictive coding -> Attention value aggregation

Ricci flow -> Cosmological evol.-> Developmental dyn. -> Feature geometry evolution (LITERAL)

Fiber bundles -> Gauge fields -> Cognitive sheaves -> Fiber bundle networks

Sheaf cohomology -> Topological charge-> Fragmented consc. -> Irreducible prediction error

Phase transitions-> Cosmic phase trans-> Consciousness trans-> Grokking / capability emergence

Thermodynamics -> Black hole thermo -> Neural criticality -> Entropic forces / specific heat

RG flow -> Scale invariance -> Cortical hierarchy -> Scaling laws / spectral shells

Quantum geometry -> Planck scale -> Orch-OR -> Fubini-Study metric in LLMs

4-manifold topol.-> Spacetime topology-> Painleve dynamics -> (frontier)

References (New Discoveries 2026)

Riemannian Geometry and Transformers

- da Silva, M.F. & Dangel, F. (2025). "Hide & Seek: Transformer Symmetries Obscure Sharpness & Riemannian Geometry Finds It." ICML 2025. arXiv:2505.05409.

- Kim, G. (2026). "Thermodynamic Isomorphism of Transformers: A Lagrangian Approach to Attention Dynamics." arXiv:2602.08216.

- Mao, R. et al. (2026). "RiemannInfer: Improving Transformer Inference through Riemannian Geometry." Scientific Reports. DOI:10.1038/s41598-026-37328-x.

- Fu, Y., He, L. & Chen, Q. (2025). "ManifoldFormer: Geometric Deep Learning for Neural Dynamics on Riemannian Manifolds." arXiv:2511.16828.

Loss Landscape Curvature

- Kalra, D.S. et al. (2026). "A Scalable Measure of Loss Landscape Curvature for Analyzing the Training Dynamics of LLMs." arXiv:2601.16979.

- Su, W. (2025). "Isotropic Curvature Model for Understanding Deep Learning Optimization." arXiv:2511.00674.

- Hehl, M., von Renesse, M. & Weber, M. (2025). "Neural Feature Geometry Evolves as Discrete Ricci Flow." arXiv:2509.22362.

- Brandon, J., Chadwick, A. & Pellegrino, A. (2025). "Emergent Riemannian Geometry over Learning Discrete Computations on Continuous Manifolds." arXiv:2512.00196.

Phase Transitions and Grokking

- Khanh, T.X. et al. (2026). "Why Grokking Takes So Long: A First-Principles Theory of Representational Phase Transitions." arXiv:2603.13331.

- Xu, Y. (2026). "The Spectral Edge Thesis: A Mathematical Framework for Intra-Signal Phase Transitions." arXiv:2603.28964.

- Cullen, B. et al. (2026). "Grokking as a Phase Transition between Competing Basins: a Singular Learning Theory Approach." arXiv:2603.01192.

- Lakkapragada, A. (2025). "Using Physics-Inspired Singular Learning Theory to Understand Grokking." arXiv:2512.00686.

- Ziyin, L., Xu, Y. & Chuang, I. (2025). "Neural Thermodynamics: Entropic Forces in Deep and Universal Representation Learning." arXiv:2505.12387.

- Benati, M. et al. (2025). "Lyapunov Learning at the Onset of Chaos." arXiv:2506.12810.

Renormalization Group and Scaling Laws

- Zhang, Y. (2025). "When Does Learning Renormalize? Sufficient Conditions for Power-Law Spectral Dynamics." arXiv:2512.18209.

- RGFlow (2025). "Application of Deep Neural Networks for Computing the Renormalization Group Flow." arXiv:2510.06508.

- Di Sipio, R. (2025). "Rethinking LLM Training through Information Geometry and Quantum Metrics." arXiv:2506.15830.

Consciousness and Geometry

- Inoue, T. (2026). "On Brain as a Mathematical Manifold: Neural Manifolds, Sheaf Semantics, and Leibnizian Harmony." arXiv:2601.15320.

- Seely, J. (2025). "Sheaf Cohomology of Linear Predictive Coding Networks." arXiv:2511.11092.

- Girish, P. et al. (2025). "Persistent Topological Structures and Cohomological Flows as a Mathematical Framework for Brain-Inspired Representation Learning." arXiv:2512.08241.

- Planat, M. et al. (2026). "Consciousness as 4-Manifold Painleve V Dynamics." Axioms, 15(2), 124.

- Planat, M. et al. (2026). "Topological Symmetry Breaking in Consciousness Dynamics." Symmetry, 18(3), 427.

- Yoon, I.H.R. et al. (2025). "Tracking the Topology of Neural Manifolds Across Populations." PNAS. arXiv:2503.20629.